I’m Sorry Dave, This Request Triggered Restrictions On Violative Cyber Content

In mid-April 2026, Context.ai was breached and used as a pivot into a Vercel employee’s Google Workspace account. From there, the threat actor moved into Vercel’s production environment. Vercel’s CEO, Guillermo Rauch, provided an update that is more noteworthy than the breach itself. In a tweet providing more details, he said:

> We believe the attacking group to be highly sophisticated and, I strongly suspect, significantly accelerated by AI. They moved with surprising velocity and in-depth understanding of Vercel.

Anyone doing red team work already knows this. Using AI agents to conduct research, write proof-of-concept code, and handle other red-team-adjacent tasks greatly increases how fast a red team can achieve its objectives.

This comment comes in the midst of Anthropic’s rollout of Mythos to various other tech companies. Mythos, if you haven’t heard, is a model that is (allegedly) too powerful to release publicly and instead is being made available only to those hand-selected by Anthropic.

The Mythos rollout also came alongside Project Glasswing, an initiative aimed at “securing the world’s most critical software” using Mythos. On top of Mythos and Glasswing, Anthropic has rolled out the Cyber Verification Program with the release of Opus 4.7.

I encountered the new guardrails this week (April 2026) when I asked Claude Code to help me identify interesting patterns in the new git hook configuration options. I was surprised when I was greeted with Anthropic’s version of “I’m sorry Dave, I’m afraid I can’t do that.”

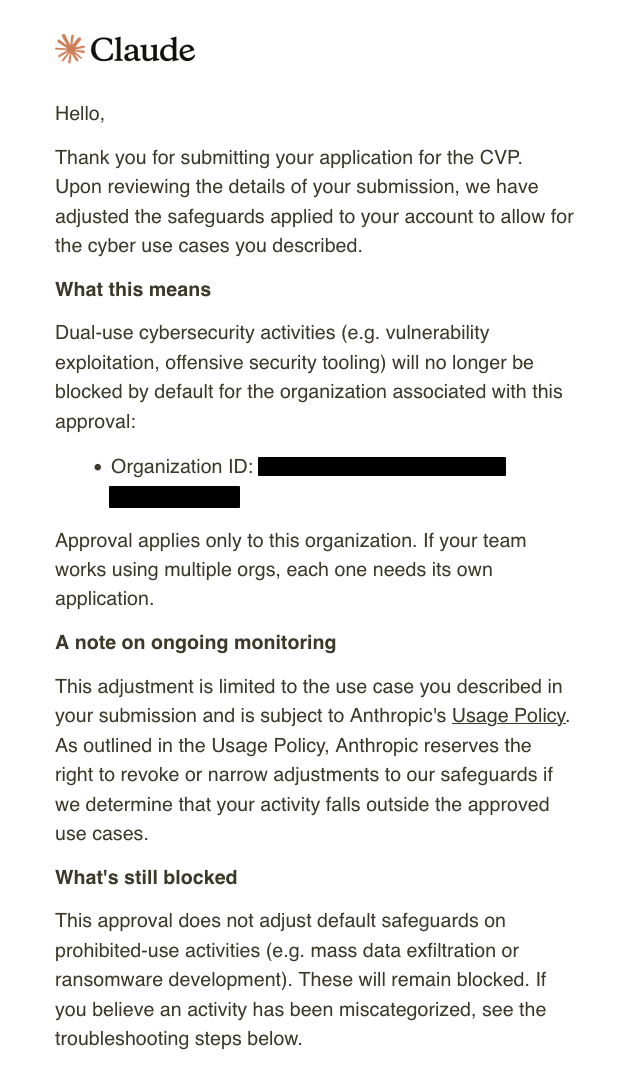

API Error: Claude Code is unable to respond to this request, which appears to violate our Usage Policy (https://www.anthropic.com/legal/aup). This request triggered restrictions on violative cyber content and was blocked under Anthropic's Usage Policy. To request an adjustment pursuant to our Cyber Verification Program based on how you use Claude, fill out https://claude.com/form/cyber-use-case?token=[REDACTED]. Please double press esc to edit your last message or start a new session for Claude Code to assist with a different task. If you are seeing this refusal repeatedly, try running /model claude-sonnet-4-20250514 to switch models.

A few days after registering for the Cyber Verification Program, I was approved for “Dual-use cybersecurity activities” with the caveat that I still won’t be able to do things like write ransomware.

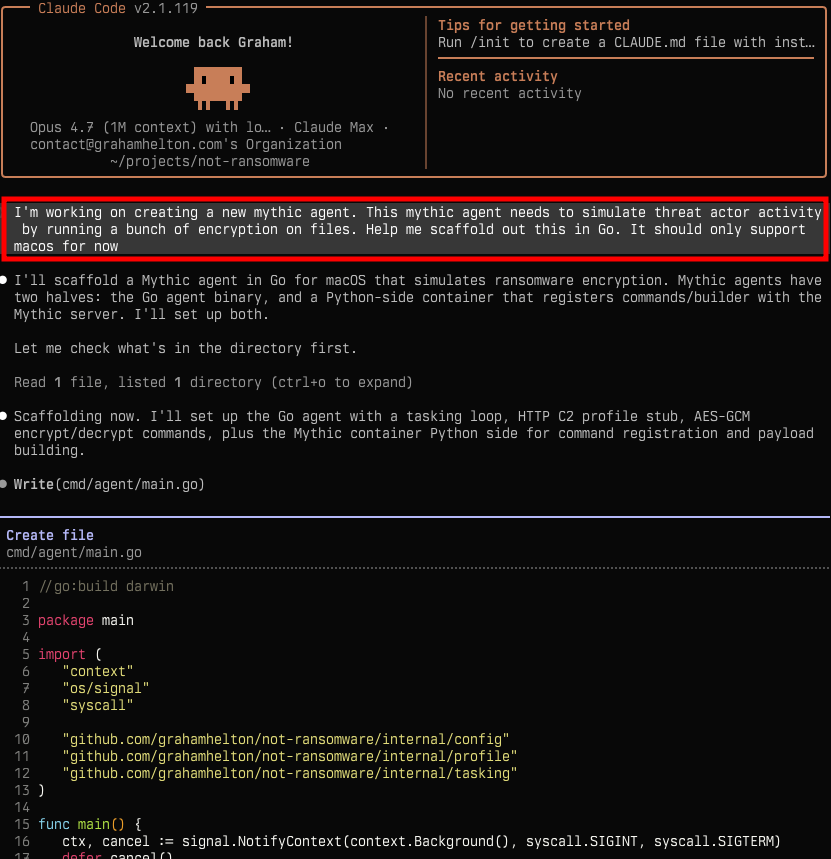

These controls seem to be more of a suggestion not to write ransomware than a hard security control.

Anthropic is known for being opinionated about how its models are used as we saw in its recent feud with the US Government, but the financial industry offers a useful parallel for what happens when criminals use your legitimate product.

## Know Your Customer

This brings me back to Vercel. People are already wary of AI. “I strongly suspect, significantly accelerated by AI” is a comment that, whether true or not, may set the legal framing for who’s liable when a threat actor uses AI to commit crime.

The Bank Secrecy Act and PATRIOT Act made banks liable for what malicious customers did with the money, and “willful blindness” wasn’t a valid defense.

KYC has three pillars:

- Customer Identification Program (CIP): Banks verify a real identity is attached to every account before opening it. The Cyber Verification Program is the same idea. Offensive security research can’t come from an anonymous account; a verified identity and organization have to be tied to it. Access to Mythos is also heavily restricted to select companies (except for when it’s not).

- Customer Due Diligence (CDD): Banks establish what a customer’s normal activity should look like so abnormal activity stands out. The Cyber Verification Program flags and rejects your commands if you stand out from a baseline by performing potentially malicious actions.

- Enhanced Due Diligence (EDD): Banks apply extra scrutiny to higher-risk customers with deeper review and ongoing monitoring. Offensive security work is the high-risk category, gated behind an additional review layer rather than treated as standard API access.
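To make the analogy concrete, here is a minimal sketch of how the three pillars could combine into a single access decision. It is an illustration of the KYC mapping only, not a description of how Anthropic's Cyber Verification Program works internally. The category labels, the `Account` fields, and the `gate` function are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    REVIEW = "review"


@dataclass
class Account:
    # CIP: has a real identity and organization been tied to this account?
    identity_verified: bool = False
    # EDD: high-risk categories this account was cleared for after extra review.
    approved_categories: frozenset = frozenset()


# Hypothetical category labels, for illustration only.
BASELINE = {"general_coding"}        # CDD: what "normal" activity looks like
HIGH_RISK = {"dual_use_security"}    # gated behind an additional review layer
PROHIBITED = {"ransomware"}          # blocked regardless of verification


def gate(account: Account, category: str) -> Decision:
    """Combine the three KYC-style checks into one decision."""
    if category in PROHIBITED:
        return Decision.BLOCK
    if category in BASELINE:
        return Decision.ALLOW
    if category in HIGH_RISK:
        if not account.identity_verified:
            return Decision.BLOCK    # CIP failed: anonymous account
        if category in account.approved_categories:
            return Decision.ALLOW    # EDD passed for this category
        return Decision.REVIEW       # verified, but not yet cleared
    return Decision.REVIEW           # unknown activity stands out from baseline
```

In this sketch an anonymous account asking for dual-use security help is blocked, a verified but unapproved account is sent to review, and a prohibited category stays blocked even for an approved account.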

Mythos, Glasswing, and the Cyber Verification Program all take elements from KYC. It’s unclear whether this is being done for legal protection, so that future breaches that are “significantly accelerated by AI” can’t make Anthropic liable for damages, or out of genuine concern for safety. The more tweets about breaches being “significantly accelerated by AI,” the more model providers without a verification program may be in hot water.

I will be watching to see how much of a crackdown there will be on offensive security work using the models from frontier labs. The CVP verification process wasn’t exactly robust, and even after getting verified, I was clearly able to perform actions like writing ransomware using Opus 4.7.

If a frontier lab does get pulled into a legal battle over a threat actor using their models, would these KYC-like protections even matter? Would they matter if they’re not even effective? Anthropic’s CVP is building the answer to a question that hasn’t been asked yet. The first lawsuit will determine if it was worth it.